Translating a shader from Blender to Unity / VSeeFace

Recently, I've worked with a few V-Tuber artists on face shading (or, more accurately, I listened to what they were talking about and asked questions), and as part of that, I started translating aVersionOfReality's shader to Unity / VSeeFace.

This is very tricky for artists, as this requires knowledge of not only programming, but graphics programming, which is quite advanced. Luckily, this is my passion, my creed, my life, and more seriously my actual job in AAA game development, so I figured writing this could help that transition.

This article will get technical, but I'll try to focus on the practical aspects and explain things in plain terms. Don't be scared if you're not familiar with programming!

This article has two main parts: how to feed the code into the engine, and how to translate it. After that I'll talk about some finer points, like user control and VSeeFace integration.

You can find the code itself here. Don't hesitate to shoot me questions on Twitter or Discord.

The shader we're going to translate

Before starting this, let's take a quick look at the shader we will be translating.

aVersionOfReality's shader computes new normals for the face of a 3D model, based on where we are on the face. This is done through math, with a few specific steps for parts like the nose. Please refer to his video for more details, although this is not needed for the translation itself.

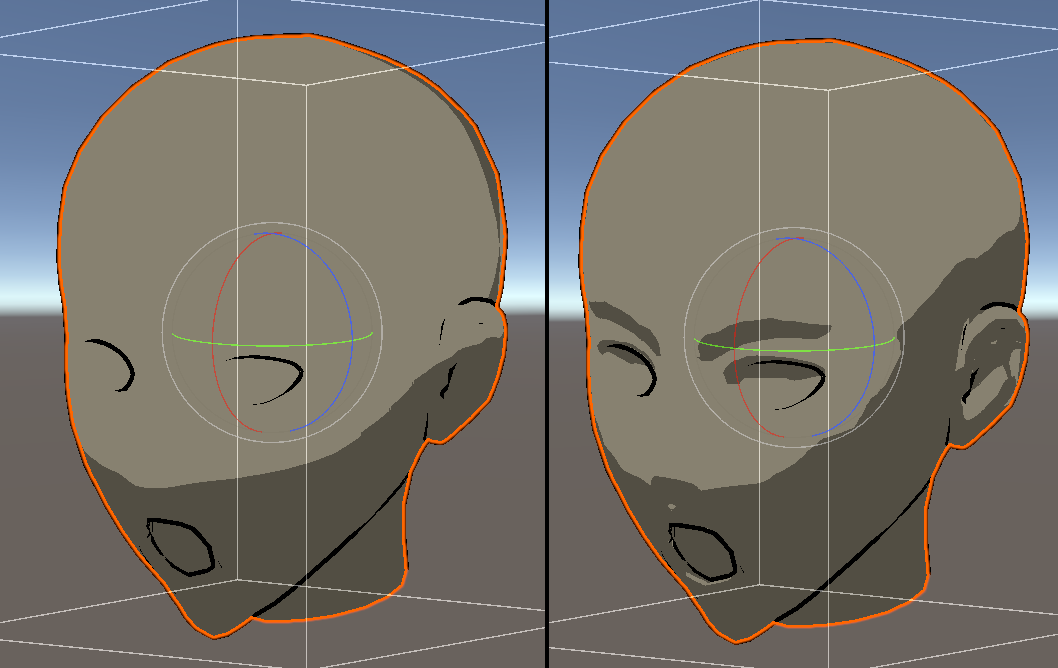

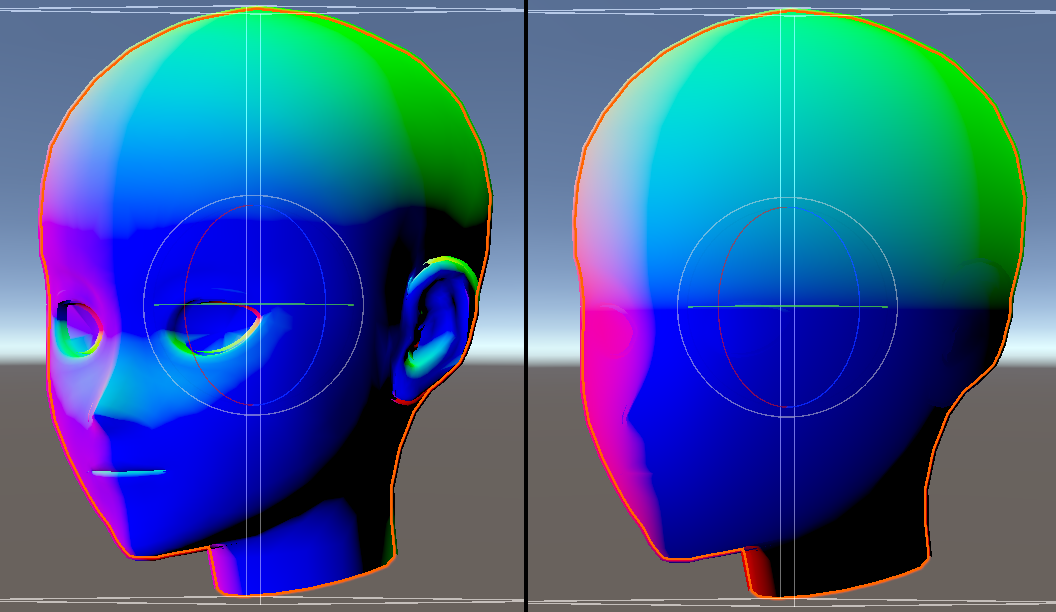

Screenshot from Unity. Original normals on the right, GenerateFaceNormals on the left.

What are normals?

Normals are the vectors perpendicular to the surface of the mesh at each point. They are needed for shading so that we can compute how the light reflects off the surface, and thus what the final color will be. By manipulating them, we can adjust how the shading is done.
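To make this concrete, here is a minimal sketch in plain Python (not shader code) of the classic Lambert diffuse term, where the brightness is the dot product of the normal and the light direction. This is an assumption chosen for illustration: it is not MToon's actual lighting, and the function names are mine.

```python
import math

def normalize(v):
    """Scale a vector so that its length is 1."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)

def dot(a, b):
    """Dot product of two 3D vectors."""
    return sum(x * y for x, y in zip(a, b))

def lambert(normal, light_dir):
    """Diffuse intensity: 1 when the surface faces the light, 0 when it faces away."""
    return max(0.0, dot(normalize(normal), normalize(light_dir)))

# Direction from the surface towards the light.
light_dir = (0.0, 0.0, 1.0)

facing = lambert((0.0, 0.0, 1.0), light_dir)   # normal points at the light
tilted = lambert((0.0, 1.0, 1.0), light_dir)   # normal tilted 45 degrees away
away = lambert((0.0, 0.0, -1.0), light_dir)    # normal points away from the light
```

The geometry and the light are identical in all three cases. Only the normal changes, and the brightness changes with it, which is why rewriting the normals is enough to reshape the shading of a face.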

Integrating the shader into Unity

While computer science is about algorithms, programming is about where to put them, so that's what we're going to look at first: where to plug in our shader. This is the part that is more engine-dependent.

What is a shader?

A shader is a program that runs on the graphics card, usually to compute images. It is most often written in GLSL or HLSL, which are similar to C. These can be complex because you have to know how the machine works to really use them, but for our purposes today that won't be needed.

Using MToon as a base

Since our shader here only adjusts normals, we can plug it into pretty much any shader by replacing its "normal" variable. We're going to use MToon since it is a standard and is already included.

To do that, let's start by copying the files to a separate folder. Then, we change the name of the shader at the top of the file so that it will show separately. Finally, we create a new material and assign it to our model. We are now ready to start.

Then, we need to locate where the normal is computed. I do this by using the find / search function and reading the code. Here is the code that actually manages this:

// From MToonCore.cginc

#ifdef _NORMALMAP

half3 tangentNormal = UnpackScaleNormal(tex2D(_BumpMap, mainUv), _BumpScale);

half3 worldNormal;

worldNormal.x = dot(i.tspace0, tangentNormal);

worldNormal.y = dot(i.tspace1, tangentNormal);

worldNormal.z = dot(i.tspace2, tangentNormal);

#else

half3 worldNormal = half3(i.tspace0.z, i.tspace1.z, i.tspace2.z);

#endif

What does this code mean?

This code has two parts, separated by preprocessor directives (lines starting with '#'). The first part is only used if _NORMALMAP exists (i.e. we have set a normal map texture), and it uses that texture to perturb the stored normal. The second part just uses the stored normal directly when no normal map is set.

Now that we know that the normal is stored in worldNormal, we just need to put ours there and we're good!

Keeping it clean

Let's not bury our complex method in the middle of an already big file. Keeping the code clean will help us develop faster and reuse our code more easily.

In order to do that, we will make it a function and store it in another file, MToonGFN.cginc, in which we will put our main function: GenerateFaceNormals.

// At the top of the previous file, MToonCore.cginc

#include "./MToonGFN.cginc"

// [...]

// ------------

// Where the worldNormal code was

half3 worldNormal = half3(i.tspace0.z, i.tspace1.z, i.tspace2.z);

worldNormal = GenerateFaceNormals(objectCoords, 0.0, 0.0, worldNormal);

// [...]

// ------------

// In the new file, MToonGFN.cginc

half3 GenerateFaceNormals(half3 objectCoords, float fac, float mask, half3 regularNormals) {

// [...]

}

This will allow us to keep our additions mostly in a single file, making them easier to read, and we can now include this same file elsewhere if we inject it into another shader.

Helping development: Debug Mode

Graphics programming can be hard to debug, especially when working with less visible data like normals. That's why we are going to add some code paths that show us intermediate results as we work on them. We do this by adding a variable we will call _gfnDebugMode: when its value is different from 0, we show a specific piece of data instead of the final color.

The main mode we're going to add is showing the normal itself. There are two other modes in my code, but those are related to object space and specific parts of the algorithm.

if(_gfnDebugMode == 2)

return float4(worldNormal.xyz, 1.0);

What does this code do?

Here we start by checking if we are in the correct debug mode (2 in our case). If so, we stop the computation and show only the value of worldNormal. The exact reason why is slightly tricky: since the function this code is part of must send back the final color, we tell it to both do that and stop further computation by using the return keyword, which marks the end of the function. This basically short-circuits the remainder of the function.
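The same early-return pattern can be sketched in plain Python. This is only a stand-in for the shader's fragment function: shade_pixel, lit_color and the sample values are hypothetical names and numbers chosen for illustration.

```python
def shade_pixel(world_normal, lit_color, debug_mode=0):
    """Stand-in for the fragment function: always returns an RGBA color."""
    if debug_mode == 2:
        # Early exit: output the normal as a color and skip everything after this line.
        return (world_normal[0], world_normal[1], world_normal[2], 1.0)

    # ... the regular shading would run here; we just pass a precomputed color through ...
    return (lit_color[0], lit_color[1], lit_color[2], 1.0)

normal = (0.0, 1.0, 0.0)
color = (0.5, 0.4, 0.3)

debug_output = shade_pixel(normal, color, debug_mode=2)   # shows the normal
normal_output = shade_pixel(normal, color)                # shows the shaded color
```

With the debug mode set to 2, the output is the normal itself, so a normal pointing up shows as pure green. With any other value, the function runs to the end as usual.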

And with that, we're now ready to start!

Translating the shader itself

So now we're getting to the algorithm part, the nodes themselves. Thankfully, at this point we don't really have a lot to think about, since the logic itself has already been done.

Therefore, it is simply a matter of taking each node one by one and using the matching functions. However, sometimes those functions don't actually exist and we need to create them, so I'll give a few tips. In addition, we can often go a bit further by optimizing things, but this is mostly outside the scope of this article.

If it's so simple, why isn't it automatic?

I think it could mostly be, and it's what happens with node editors. However, there are complications. The first one is that you require the code of each node, which may or may not be available. The second one is that different rendering engines have different structures and particularities, some of which might not exist from one to the other, and thus are untranslatable or would need to do something different.

This is especially true when going from a non-realtime engine to a realtime one, as there are a lot more constraints and thus some methods don't work anymore.

Node subgroups as functions

params, output

A good place to start is to understand that nodes and node subgroups are functions. When working from aVersionOfReality's node graph, this is how I proceeded:

- I created a function for each subgroup.

- I took all the inputs and added them as parameters (with the correct type) for the function.

- For functions with one output, I used it as the return value. For those with more than one, I used the out keyword.

- I then started translating the nodes one by one.

This translation can mostly be done using standard functions, and for the more complex nodes you can write your own functions to save time. Some nodes might have more complex math in them, which makes them trickier. Finally, the names of the functions and the parameters can differ and trip you up (for example, what GLSL calls mix is called lerp in HLSL).
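The steps above can be sketched in plain Python (not shader code). The node names Separate XYZ and Map Range come from Blender, but map_range here only covers the simple linear, unclamped case, and face_gradient is a hypothetical node group invented for this example, not one from aVersionOfReality's graph.

```python
def mix(a, b, t):
    """Linear blend between a and b. GLSL names this mix, HLSL names it lerp."""
    return a + (b - a) * t

def map_range(value, from_min, from_max, to_min, to_max):
    """Simplified Map Range node: linear remap, no clamping."""
    t = (value - from_min) / (from_max - from_min)
    return mix(to_min, to_max, t)

def separate_xyz(vector):
    """One input, three outputs. Python returns a tuple where HLSL would use out parameters."""
    x, y, z = vector
    return x, y, z

def face_gradient(object_coords, low, high):
    """Hypothetical node group: Separate XYZ feeding the Z output into Map Range."""
    _, _, z = separate_xyz(object_coords)
    return map_range(z, low, high, 0.0, 1.0)

blend = mix(0.0, 10.0, 0.25)                           # a quarter of the way from 0 to 10
gradient = face_gradient((0.0, 0.0, 0.5), 0.0, 2.0)    # Z = 0.5 remapped from [0, 2] to [0, 1]
```

Each node becomes a small function, each group becomes a function that calls them, and the wires of the graph become arguments and return values.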

The basis problem

A tricky part is the fact that Unity and Blender don't use the same vector basis: Blender is right-handed and Z-up, while Unity is left-handed and Y-up. You might need a conversion in your code.

Since I didn't want to adapt all the complex math inside, I opted to convert them before and after my computation, so that I could do it with the Blender basis. This might not be needed for most shaders.
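To check that the two conversions used in this section's shader code really undo each other, here is a plain Python sketch that mirrors them. The function names are mine. The component swaps are copied from the shader code, and the cross product check is an extra I added to show the change of handedness.

```python
def unity_to_blender(v):
    """Same swap as the first conversion in the shader code: (-x, -z, y)."""
    x, y, z = v
    return (-x, -z, y)

def blender_to_unity(v):
    """Same swap as the second conversion in the shader code: (-x, z, -y)."""
    x, y, z = v
    return (-x, z, -y)

def cross(a, b):
    """Cross product of two 3D vectors."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

# Unity's up axis (Y) becomes Blender's up axis (Z).
blender_up = unity_to_blender((0.0, 1.0, 0.0))

# Converting there and back gives the original vector again.
round_trip = blender_to_unity(unity_to_blender((1.0, 2.0, 3.0)))

# Handedness flips: the cross product of the converted X and Y axes
# is the opposite of the converted Z axis.
converted_x = unity_to_blender((1.0, 0.0, 0.0))
converted_y = unity_to_blender((0.0, 1.0, 0.0))
converted_z = unity_to_blender((0.0, 0.0, 1.0))
flipped = cross(converted_x, converted_y) == tuple(-c for c in converted_z)
```

Because the round trip is exact, everything between the two conversions can be written with Blender's conventions without leaking into the rest of the Unity shader.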

// Convert from unity coords to blender coords

worldNormal = half3(-worldNormal.x, -worldNormal.z, worldNormal.y);

// This function now uses blender coords.

worldNormal = GenerateFaceNormals(objectCoords, 0.0, 0.0, worldNormal);

// Convert from blender coords to unity coords

worldNormal = half3(-worldNormal.x, worldNormal.z, -worldNormal.y);

Matrix transforms

This can be complex if you're not used to it. Since this is a specialized subject, I won't explain it here, but I will show you the syntax. This is part of what drives the "head tracking" of the shader.
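If matrices are new to you, a small numeric sketch may help before reading the shader syntax. The plain Python code here builds the same kind of 4x4 rotation matrix as the shader example in this section (a rotation around the X axis) and applies it to a point. The names rotation_x and mul_matrix_vector are mine, and the 90 degree angle is just a sample value.

```python
import math

def rotation_x(degrees):
    """4x4 matrix rotating around the X axis, laid out row by row."""
    c = math.cos(math.radians(degrees))
    s = math.sin(math.radians(degrees))
    return [
        [1.0, 0.0, 0.0, 0.0],
        [0.0, c,   -s,  0.0],
        [0.0, s,   c,   0.0],
        [0.0, 0.0, 0.0, 1.0],
    ]

def mul_matrix_vector(matrix, vector):
    """Matrix times column vector: each output component is one row dotted with the vector."""
    return [sum(row[i] * vector[i] for i in range(4)) for row in matrix]

# A point one unit along Y, with w = 1 as the fourth component.
point = [0.0, 1.0, 0.0, 1.0]

# Rotating it by 90 degrees around X moves it onto the Z axis.
rotated = mul_matrix_vector(rotation_x(90.0), point)
```

The X component is untouched because the first row of the matrix only reads X, while Y and Z are mixed together by the cosine and sine terms.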

// Example with a rotation matrix

float cosPitch = cos(radians(_gfnEulerAngles.z));

float sinPitch = sin(radians(_gfnEulerAngles.z));

// Matrix declaration

float4x4 rotationMatrixPitch = float4x4(

1.0, 0.0, 0.0, 0.0,

0.0, cosPitch, -sinPitch, 0.0,

0.0, sinPitch, cosPitch, 0.0,

0.0, 0.0, 0.0, 1.0

);

// ObjectCoords is a float4.

objectCoords = mul(rotationMatrixPitch, objectCoords);

Additional topics

Here are a few finer points.

User Friendliness

If you're releasing your code for other artists, requiring them to take a deep dive into your code is going to restrict your method's use. Making it simpler to use also allows you to iterate faster, which is important for the final quality.

This can go further into the realm of tool programming, which is an actual job in itself. Something simple you can use, however, is gizmos: the interface elements you can draw in the editor. For aVersionOfReality's shader, I've opted to show the range of the "empty object", as this is an important part of the algorithm and needs to be manually adjusted. Here is the annotated code for the script:

using System.Collections;

using System.Collections.Generic;

using UnityEngine;

public class GFNViewer : MonoBehaviour

{

// I take a reference to the Material I'll adjust, and the head bone since I need it.

public Material gfnMaterial;

public Transform headBone;

// You need a function named exactly like this for gizmos to work.

public void OnDrawGizmos() {

// This is a safety that won't draw anything until the needed parameters are correct.

if(gfnMaterial == null || headBone == null)

return;

Gizmos.color = Color.green;

// This algorithm will display the position of the box, for that, I need the parameters from the material, that I get with methods like GetFloat.

Vector3 centerRaw = new Vector3(

gfnMaterial.GetFloat("_gfnObjectCoordsTranslateX"),

gfnMaterial.GetFloat("_gfnObjectCoordsTranslateY"),

gfnMaterial.GetFloat("_gfnObjectCoordsTranslateZ")

);

Vector3 scaleRaw = new Vector3(

gfnMaterial.GetFloat("_gfnObjectCoordsScaleX"),

gfnMaterial.GetFloat("_gfnObjectCoordsScaleY"),

gfnMaterial.GetFloat("_gfnObjectCoordsScaleZ")

);

Vector3 center = new Vector3(-1.0f*centerRaw.x, -1.0f*centerRaw.y, -1.0f*centerRaw.z) + headBone.position;

Vector3 scale = new Vector3(2.0f/scaleRaw.x, 2.0f/scaleRaw.y, 2.0f/scaleRaw.z);

// Finally, I can draw my cube + lines at the center for clarity.

Gizmos.DrawWireCube(center, scale);

Gizmos.DrawLine(center + Vector3.right * scale.x, center + Vector3.left * scale.x);

Gizmos.DrawLine(center + Vector3.up * scale.y, center + Vector3.down * scale.y);

Gizmos.DrawLine(center + Vector3.forward * scale.z, center + Vector3.back * scale.z);

}

}
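The arithmetic in the script can be checked outside Unity with a plain Python sketch. It mirrors the two formulas of the script (the center is the head position minus the translation, the size is 2 divided by the scale). The function name gizmo_box and the sample values are made up for illustration, and the scale values are assumed to be non-zero.

```python
def gizmo_box(translate, scale, head_position):
    """Return (center, size) of the wire cube, using the same formulas as the script."""
    # Center: the material's translation is negated, then offset by the head bone position.
    center = tuple(h - t for t, h in zip(translate, head_position))
    # Size: a larger scale in the material means a smaller box.
    size = tuple(2.0 / s for s in scale)
    return center, size

# Made-up sample values standing in for the material properties and the head bone.
center, size = gizmo_box(
    translate=(0.0, -0.1, 0.0),
    scale=(10.0, 8.0, 10.0),
    head_position=(0.0, 1.5, 0.0),
)
```

With these sample values the box sits slightly above the head bone and is about 20 to 25 centimeters wide, which is the kind of sanity check the gizmo gives you visually in the editor.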

VSeeFace

VSeeFace adds restrictions due to the fact that it uses UnityPackages, and thus can't include new scripts. This was problematic because I needed one to pass the transformation matrix for the head tracking.

The only way to fix that was to ask EmillianaVT directly to include a script that could do that in the next release of VSeeFace, so I cleaned up my script to be more generic and in line with the rest. That script is now included in the SDK as VSF_SetShaderParamFromTransform, and allows you to get the transformation matrices or their subparts directly into your shaders. Nice!

A complication I still haven't solved at the moment is how exactly the head tracking works. From what I know of Unity projects, the engine will move the bones to the correct position at runtime, but this doesn't seem to happen here. I'll update the article when I have investigated the issue more.

Conclusion

There you have it: how to translate a shader from Blender to Unity. This can be tedious, technical, and really dependent on your project (so there might be a lot of blind spots in this article). However, advanced shaders can really bring your projects to life, which makes this a very powerful tool. If at all possible, I recommend working in a standard language directly to make the transitions a lot easier, but sometimes you have to go through this whole process.

Since this is a technical article, written by someone technical, aimed at people who might not be, there might be a lot of blind spots or parts that aren't explained properly. Don't hesitate to shoot me questions on Twitter or Discord, and thanks for reading!

Thanks to aVersionOfReality and Tanchyuu for welcoming me on the project!